A persistent feature of science-fiction and fantasy stories, from King Kong and Godzilla to recent films like Rampage, is the animal that is suddenly made much larger. Of course, in real life it doesn’t quite work this way [1]. For every animal there is an approximately correct size: not optimal, since even within a species there are variations, but most convenient. A large change in size inevitably carries with it a change in form. The reasons for this are rooted in physics and materials science. Let’s consider a more human example from the classic pulp sci-fi movie “Attack of the 50 Foot Woman”. At 50 feet tall the protagonist is roughly ten times taller than a typical person, but she is also ten times wider and ten times thicker, so she weighs about one thousand times what she did at her normal size. Unfortunately for Nancy Archer (the titular 50-foot woman), the cross-section of each leg bone is only one hundred times greater, so each unit of bone cross-section has to support ten times the weight it did before. Human thigh bones break under about ten times human weight, so Nancy would break her legs with each step she took. Even humans who are not fantastically large but merely unusually so, for example those who suffer from gigantism, often have multiple health problems arising from the added strain on the skeletal and circulatory systems.
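The arithmetic behind Nancy’s broken legs is short enough to write down. The sketch below is only an illustration of the square-cube law described above; the names `Scaled` and `scale` are made up for this example, and it assumes the body is scaled uniformly in every dimension, which no real animal is.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scaled:
    """Relative change in each quantity when every length is multiplied by k."""
    length: float
    area: float    # bone cross-section, skin, gut lining: grows with k squared
    weight: float  # proportional to volume: grows with k cubed
    stress: float  # weight carried per unit of cross-section: grows with k


def scale(k: float) -> Scaled:
    """Square-cube law for a body scaled uniformly by a factor k (an idealization)."""
    area = k ** 2
    weight = k ** 3
    return Scaled(length=k, area=area, weight=weight, stress=weight / area)


if __name__ == "__main__":
    giant = scale(10)  # roughly a 5-foot person becoming 50 feet tall
    print(f"weight x{giant.weight:g}, bone area x{giant.area:g}, "
          f"load per unit area x{giant.stress:g}")
```

The same function run with `k` below 1 gives the insect’s side of the story: weight falls away much faster than surface area does.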

Different forces affect animals in different ways depending on their size. Insects, which weigh very little, have no fear of gravity. They can fall almost any distance without danger, and cling upside down with relative ease. A mouse can fall from a tall building and walk away from it, whereas a rat is killed and a human is broken beyond recognition. This is because air resistance is proportional to the surface area of a moving body. A ten-fold reduction in size results in a thousand-fold reduction in weight, but only a hundred-fold reduction in surface area, so the smaller animal has ten times as much air resistance relative to its weight. This mass-to-surface-area relationship acts in the opposite way with respect to keeping warm, and favours larger animals. Warm-blooded animals at rest lose roughly the same amount of heat from a unit area of skin (with some adjustment for insulation like fat and fur). To provide this heat they need to eat, so it is not surprising that warm-blooded animals eat in proportion to their surface area, not their mass. A mouse must eat about a quarter of its body-weight in food every day, most of which goes to keeping it warm. Cold climates favour large and well-insulated animals like bears, seals and walruses.

Although large animals are imperiled by gravity, there is one force that size makes us immune to but which is perilous to smaller animals. Due to surface tension, a human getting out of the bath carries a film of water fractions of a millimeter thick, which weighs less than half a kilogram. Because that film is just as thick on a small animal, which has far more surface area for its mass, a wet mouse carries about its own weight of water. A fly that gets wet has to lift many times its own weight, which effectively traps it.

Photo Courtesy Sandra-Photographie

Surface area forces other important considerations in the physical structure of animals beyond the hazards of getting wet. Microscopic animals like rotifers and tiny worms don’t have lungs. Instead, their skin has enough surface area for all the oxygen they need to soak in directly. They also have a straight gut which has enough surface area to absorb nutrients. If this microscopic animal increases ten times in size, its weight increases a thousand times. It will need a thousand times as much food and oxygen per day to maintain the same level of function as its regular-sized counterpart, but the surface area of its gut and skin will have only increased a hundred-fold. Ten times as much oxygen needs to enter through each unit area of its skin, and ten times as much food through each unit area of its intestines. Once the limits of absorption have been reached there needs to be some structural change to provide more surface area, like lungs or gills. Comparative anatomy is largely the story of the struggle to increase surface in proportion to volume. Viewed from this perspective, many of the organs in the human body look like hacks to get around changes in scale, such as the lungs, which manage to pack 50 to 75 square meters of surface area into our chest cavities, or the intestines, which are over 7 meters long, coiled through our abdomen, and covered internally with finger-like projections to increase their surface area.

Large animals didn’t grow larger because they had become more complicated; they had to become more complicated because they’d grown larger.

These lessons of scale apply equally to organizations. A two-person company doesn’t need an HR department, but a thousand-person one probably does. The processes used by organizations also reflect their scale. If you’ve ever gone from a small to a large company or vice-versa the differences can feel disconcerting, liberating or alien. No-one working for a small start-up or on a small project longs for big-company process or bureaucracy, yet strangely they often adopt the same tools, infrastructure and architectural patterns as large and successful companies. In doing so they overlook one of the biggest advantages of being small: the ability to keep things simple. Microscopic worms don’t develop a circulatory system just in case they grow to the size of a human, yet software systems are often built with components far in excess of what is required at the scale they currently operate. This is because in the virtual world these kinds of design choices are not as visible. If you saw a mouse with the large, robust legs of an elephant it would be immediately obvious that this was an animal that could not possibly survive. If you were contracted to build a hotel and built a laundry system that was thousands of times larger than what was required it would be obvious to everyone, and your hotel construction days would probably be over. But in the virtual world it is easier to justify this bullshit. It is not uncommon to hear of software systems being built with incredible fault tolerance and scalability requirements that are totally out of proportion with their current level of use.

The Road To Hell, Good Intentions, and your Microservices Cluster Supporting 100 Users

I Am an Old Man and Have Known a Great Many Troubles, But Most of Them Never Happened [2]

So why do we do this? Why do we take on these additional burdens and add unwarranted complexity to our systems for troubles that we don’t yet have? What about YAGNI? I think there are a number of related reasons at play. Many exceptional engineers work at large, high-profile companies. These engineers are working on things that do need to scale: the software equivalent of figuring out how to build the circulatory or respiratory system of some giant animal. It is natural that we look up to these people. They are solving interesting problems and are at the cutting edge of software engineering. In years gone by we probably wouldn’t have known their names or their stories, but thanks to social media now we can. Back then, using the cutting-edge stuff they were using might have come with a huge price-tag from Oracle or SGI, or required some crazy hardware from Cisco, and that would have been more-or-less the end of that. But thanks to open source we can now not only see their code but also use it “for free”. This is great if we can truly adopt this library, platform or tool at low or no cost. But more often than not these costs are downplayed, not recognized, hard to quantify, or glossed over. Design is all about trade-offs, and in many cases the trade-off for “better” designs is increased complexity.

If we’re building something for ourselves it is also easy and seductive to believe in the fantasy that what we’re building is going to be so successful that we will damn well NEED those microservices to be clustered in Kubernetes. If we don’t the VCs we haven’t met yet will be clamouring for heads to roll, and all the users we haven’t attracted yet will post snarky messages to their friends about how our awesome service they just can’t get enough of is down, again. You can practically feel the eye-rolls from here.

If we’re building for someone else there is the equally seductive myth that we’re setting them up for great success in the future. No-one wants to be accused of painting their customer into a corner by limiting their growth, so instead we create more and more complex edifices in the name of future-proofing. This tendency isn’t particularly new. In 2003 Martin Fowler wrote his First Law of Distributed Object Design, which is “Don’t distribute your objects!”

while many things can be made transparent in distributed objects, performance isn’t usually one of them. Although our prototypical architect was distributing objects the way he was for performance reasons, in fact, his design will usually cripple performance, make the system much harder to build and deploy—or both. A procedure call within a process is extremely fast. A procedure call between two separate processes is orders of magnitude slower. Make that a process running on another machine, and you can add another order of magnitude or two, depending on the network topography involved.

Fifteen years later we no longer try to make objects truly location-independent, with the same interfaces regardless of whether they are accessed locally or not, but we still tend to ignore or play down the performance costs of out-of-process communication and the added difficulty of building and deploying distributed systems. We should only take on these burdens if our problems truly demand it, not because we think that we’ll need it in the future.
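If you want to feel this cost rather than take it on faith, a rough measurement is easy to put together. The sketch below is an illustration, not a benchmark: `add_one`, `echo_server`, `time_local` and `time_remote` are names invented for this example, and the “remote” side is just a thread on the other end of a local socket pair. A real call to another process or another machine will be slower again, so treat the gap you see here as a lower bound.

```python
import socket
import threading
import time


def add_one(n: int) -> int:
    """Trivial on purpose, so that we measure call overhead rather than work."""
    return n + 1


def recv_exact(conn: socket.socket, size: int) -> bytes:
    """Read exactly `size` bytes, or return b'' if the peer has closed."""
    buf = b""
    while len(buf) < size:
        chunk = conn.recv(size - len(buf))
        if not chunk:
            return b""
        buf += chunk
    return buf


def echo_server(conn: socket.socket) -> None:
    """Stand-in for a remote service: read an 8-byte integer, reply with n + 1."""
    while True:
        data = recv_exact(conn, 8)
        if not data:
            break
        conn.sendall(add_one(int.from_bytes(data, "big")).to_bytes(8, "big"))


def time_local(calls: int) -> float:
    """Average seconds per plain in-process function call."""
    start = time.perf_counter()
    n = 0
    for _ in range(calls):
        n = add_one(n)
    return (time.perf_counter() - start) / calls


def time_remote(calls: int) -> float:
    """Average seconds per request/response round trip over a local socket."""
    client, server = socket.socketpair()
    worker = threading.Thread(target=echo_server, args=(server,), daemon=True)
    worker.start()
    start = time.perf_counter()
    n = 0
    for _ in range(calls):
        client.sendall(n.to_bytes(8, "big"))
        n = int.from_bytes(recv_exact(client, 8), "big")
    elapsed = time.perf_counter() - start
    client.close()            # the server thread sees EOF and exits
    worker.join(timeout=1.0)
    server.close()
    return elapsed / calls


if __name__ == "__main__":
    local = time_local(100_000)
    remote = time_remote(10_000)
    print(f"in-process call:   {local * 1e9:10.0f} ns")
    print(f"socket round trip: {remote * 1e9:10.0f} ns")
    print(f"ratio: about {remote / local:.0f}x")
```

The exact numbers depend on your machine, which is why none are quoted here. The point is only that the same one-line function gets far more expensive the moment a call has to leave the call stack.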

What about Instagram?

One in a hundred-thousand times software shows that it is special and isn’t bound by the laws of physics like the rest of the world is, and you get something like Instagram, which DID grow at an incredible rate. Instagram hit 1 million users in 3 months, and in the early days co-founder Mike Krieger had to carry his laptop around so he could reboot the site if it collapsed under the load. I’m sorry to burst your bubble, but however attractive the fantasy of this kind of growth is, it is incredibly unlikely. Even if your shiny new thing does achieve great success, it is probably going to come far more slowly while you achieve what venture capitalists call product-market fit. This is the process where you adapt your product as you grow to better understand what your customers want. One of the best ways to keep your software easy to change is to keep it simple. Until you start seeing that insta-like hockey-stick growth your best bet is to keep things as simple as possible. The good news is that if you can do that then scaling should be “easy” for you (for certain values of easy) because simple systems have a small number of moving parts, and are easier to reason about. We would do well to remember the “law” coined in 1975 by systems thinker John Gall that bears his name:

A complex system that works is invariably found to have evolved from a simple system that worked. A complex system designed from scratch never works and cannot be patched up to make it work. You have to start over beginning with a working simple system.

Facebook, Google, Instagram, Twitter, AirBnB, Uber and all the other unicorn start-ups you’ve heard of didn’t start off as complex systems. They grew from simple ones. Having complex architectures and using exotic languages, processes and technologies didn’t allow them to grow. Attracting millions or billions of users allowed them to grow. The complex structures they now require exist to support their size; they were not the reason for their growth.

A single server can go a long way

I start with: Do you really need N computers? Some problems really do. For example, you cannot build a low-latency index of the public internet with only 4TB of RAM. You need a lot more. These problems are great fun, and we like to talk a lot about them, but they are a relatively small amount of all the code written. So far all the projects I have been developing post-Google fit on 1 computer. - David Crawshaw, One Process Programming Notes

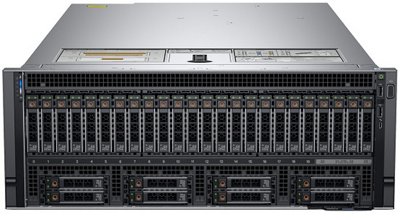

You can do a lot on a single server these days. For a few thousand dollars Dell, HP, or IBM will sell you a dual-Xeon rack-mounted server with 28 cores, half a terabyte of RAM and a pair of NVMe SSDs capable of GB/s of IO. At the high end (and for considerably more money) you can get a 4U server with 96 cores and 6TB of RAM. There are a lot of workloads that could fit on a server like that. The “total cost” of a server isn’t just the sticker price you pay when you purchase it, but buying something like this if and when your workload warrants it might be a much more predictable path to scaling up to your users’ needs. Certainly it is a better approach than spending engineering time and effort adding complexity in anticipation of future scalability needs. If you’re reading this post some time after it was published, the even better news is that the server you can buy now will be even faster and cheaper.
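A quick back-of-the-envelope check makes “a lot of workloads” a bit more concrete. Everything in the sketch below apart from the half-terabyte of RAM is an assumption picked for illustration: the function name `users_that_fit`, the 100 KiB of hot data per user, and the rule of thumb of leaving half the memory free for the operating system, caches and headroom. Plug in your own numbers.

```python
def users_that_fit(ram_bytes: int, bytes_per_user: int,
                   usable_fraction: float = 0.5) -> int:
    """Estimate how many users' working data fits in memory on one machine.

    usable_fraction is the share of RAM we allow ourselves to fill; the
    default of 0.5 is an arbitrary, conservative assumption.
    """
    if bytes_per_user <= 0:
        raise ValueError("bytes_per_user must be positive")
    if not 0 < usable_fraction <= 1:
        raise ValueError("usable_fraction must be in (0, 1]")
    return int(ram_bytes * usable_fraction) // bytes_per_user


if __name__ == "__main__":
    half_terabyte = 512 * 1024 ** 3   # the mid-range server described above
    per_user = 100 * 1024             # hypothetical: 100 KiB of hot data per user
    print(f"{users_that_fit(half_terabyte, per_user):,} users fit in RAM")
```

Memory is only one dimension, of course. The same kind of estimate for CPU, disk and network is worth doing before deciding that one machine is not enough.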

Simple

When you’re operating at a small scale the problems you face, like attracting your first handful of users, managing scant resources or delivering that client project on time, are different from the problems you face when you grow much larger. Don’t solve size-related problems you don’t have. In truth, nature doesn’t really architect for anything, but by grafting the parts of a huge software system onto a small one you create a beast that is ill-suited to its environment and, all other things being equal, will be out-competed by something more balanced. Software design can be seen as a trade-off between doing what is fast and expedient now and what is prudent and right in the long term. Keeping your design as simple as possible helps both the here-and-now and the future. Simplicity is one of the best strategies for coping with change because simple systems have fewer moving parts. They are easier to reason about. They are easier to build and deploy. They are easier to debug and monitor. And they are easier to modify, including scaling them up, should the need arise.

Portions of this article are adapted from J.B.S. Haldane’s 1928 essay On Being the Right Size.

[1] Giant shark movies like Jaws and “The Meg” are probably the exception to this.

[2] This saying has been attributed to many people. As usual Quote Investigator does a good job of tracing the origins of this quote.